AI Can Work on Its Own Now. Which Three Skills Should Office Workers Build Next?

The AI conversation has changed.

A year ago, most people treated GenAI as a better search box or writing helper. Now the real shift is agentic behavior: AI systems that can plan steps, execute tasks, and hand back work that used to take a person half a day.

If your anxiety has gone up recently, you’re not imagining it.

According to NBER Working Paper 32966, as of late 2024 nearly 40% of U.S. adults (18–64) had used generative AI, 23% of employed respondents had used it for work in the previous week, and 9% were using it every workday. That’s not an experimental edge case anymore. That’s workflow-level adoption.

So the right question is no longer “Will AI matter for my job?”

It’s this:

When AI can complete more of the execution layer, what human skills still compound in value?

Why the anxiety spiked now

The biggest reason is simple: AI is no longer only helping with drafts. It’s increasingly helping with outcomes.

OpenAI’s GDPval makes this shift concrete. OpenAI built an evaluation spanning 44 occupations and 1,320 specialized real-world tasks, then had expert graders compare model outputs with human expert outputs in blind reviews. The takeaway is not “humans are obsolete.” It is that frontier systems are now good enough at many professional tasks that oversight and orchestration become the bottleneck.

That changes salary logic inside companies:

- If your value is “I produce first drafts,” you’re exposed.

- If your value is “I design systems that reliably produce business outcomes,” you get leverage.

Displacement vs. amplification: what the data actually says

People often debate AI in extremes: “It replaces everyone” vs “It only helps.” Reality is mixed.

From NBER 31161 (5,179 customer support agents):

- Average productivity increase: 14% (measured as issues resolved per hour)

- Improvement for novice and low-skilled workers: 34%

- Minimal impact on experienced, highly skilled workers

This is the uncomfortable part for many mid-career professionals: AI can compress the experience gap.

Anthropic’s first Economic Index report shows the same mixed pattern at a macro level (source):

- Usage leaned toward augmentation (57%) over automation (43%)

- Roughly 36% of occupations showed AI use in at least a quarter of associated tasks

- Only about 4% of occupations showed use across three-quarters of associated tasks

Their later primitives report adds another important nuance: usage remains concentrated in a relatively small set of high-frequency tasks, and success rates drop as task complexity rises (source).

So no, most jobs are not being “fully replaced” right now. But yes, large parts of many jobs are being re-priced.

Skill 1: Context engineering (not just “prompting”)

Most people still think the core skill is writing a clever prompt.

It isn’t.

The real skill is context engineering: deciding what information, constraints, examples, and quality rules the model gets before it starts work.

That’s exactly why repositories like Agent-Skills-for-Context-Engineering have exploded (about 10,787 stars and 841 forks at the time of writing). Teams are realizing that output quality is mostly a context design problem.

What this looks like in practice

Bad request:

“Write a campaign plan for Q2.”

Good request:

“Write a Q2 campaign plan for mid-market B2B SaaS in APAC, based on last quarter’s conversion data, keeping CAC under X, with our existing tone guide, and include two testable channel experiments.”

Same model. Different context. Different business value.

If you can package intent and constraints clearly, you become the person AI systems depend on.
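
One way to make context engineering repeatable is to treat the brief as a structured object instead of a free-form sentence. The Python sketch below is illustrative only: `ContextBrief`, its field names, and the rule that an incomplete brief refuses to render are hypothetical choices, loosely following the goal / constraints / source docs / quality bar split used in the 30/60/90 plan later in this article. It calls no model; it only assembles the text a model would receive.

```python
from dataclasses import dataclass, field


@dataclass
class ContextBrief:
    """Hypothetical container for everything the model gets before it starts work."""
    goal: str
    audience: str
    constraints: list[str] = field(default_factory=list)
    source_docs: list[str] = field(default_factory=list)
    quality_bar: list[str] = field(default_factory=list)

    def missing_fields(self) -> list[str]:
        """Name every empty section so gaps surface before the model runs."""
        missing = [name for name in ("goal", "audience") if not getattr(self, name).strip()]
        missing += [name for name in ("constraints", "source_docs", "quality_bar")
                    if not getattr(self, name)]
        return missing

    def render(self) -> str:
        """Assemble one plain-text brief; refuse to render an incomplete one."""
        missing = self.missing_fields()
        if missing:
            raise ValueError("Context is incomplete: " + ", ".join(missing))

        def bullets(items: list[str]) -> str:
            return "\n".join(f"- {item}" for item in items)

        return (
            f"Goal: {self.goal}\n"
            f"Audience: {self.audience}\n"
            f"Constraints:\n{bullets(self.constraints)}\n"
            f"Source documents:\n{bullets(self.source_docs)}\n"
            f"Quality bar:\n{bullets(self.quality_bar)}"
        )


# The "good request" above, split into explicit sections (the CAC limit stays as X).
brief = ContextBrief(
    goal="Write a Q2 campaign plan",
    audience="Mid-market B2B SaaS buyers in APAC",
    constraints=["Keep CAC under X", "Follow our existing tone guide"],
    source_docs=["Last quarter's conversion data"],
    quality_bar=["Include two testable channel experiments"],
)
print(brief.render())
```

The point of the structure is not the code itself but the habit: every request gets the same sections, and an empty section is caught before the model ever sees it.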

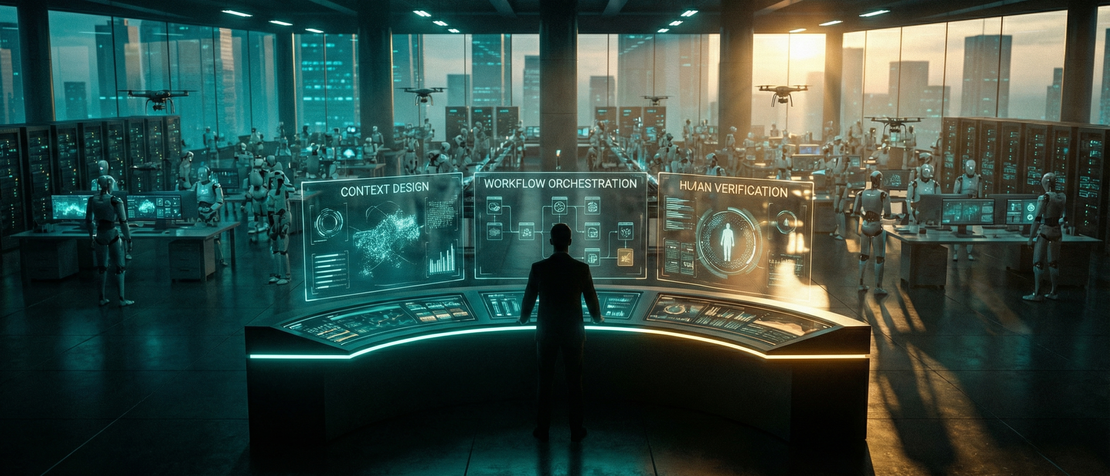

Skill 2: Workflow orchestration (AI engineering for normal teams)

A useful signal came from a recent V2EX thread where a Java backend engineer worried about being moved into an AI department (thread). The discussion was blunt: in most companies, “AI work” means integration and workflow engineering, not training foundation models from scratch.

That matches what we’re seeing broadly.

The scarce skill is not “can you train a model from zero?” The scarce skill is:

- Can you map a real business process?

- Can you split it into agent-friendly steps?

- Can you define fallbacks and human review points?

Example

Take a recurring operations process:

- Collect weekly updates from five systems

- Summarize blockers

- Draft stakeholder memo

- Propose next actions

In many teams, this is still 3–5 hours of manual work. A strong orchestrator builds a flow where AI handles the first three steps, while humans own decisions and edge cases.

That’s how you move from “task executor” to “system owner.”
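
The weekly-update example can be sketched as a small pipeline: AI-owned steps run in order, a review checkpoint follows the memo draft, any failure falls back to a person, and the decision step always lands with a human. This is a hypothetical Python sketch. `Step`, `run_workflow`, and the three step functions are made-up names, and the step bodies are plain-Python stand-ins for real system connectors and model calls.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Step:
    """One step in a hypothetical weekly-update workflow."""
    name: str
    owner: str                                      # "ai" or "human"
    action: Optional[Callable[[dict], dict]] = None
    review_after: bool = False                      # queue a human check after this step


def collect_updates(state: dict) -> dict:
    # Stand-in for pulling updates from five systems: drop empty entries.
    state["updates"] = [u for u in state["raw_updates"] if u.strip()]
    return state


def summarize_blockers(state: dict) -> dict:
    # Stand-in for a model call: keep updates that mention being blocked.
    state["blockers"] = [u for u in state["updates"] if "blocked" in u.lower()]
    return state


def draft_memo(state: dict) -> dict:
    lines = [f"- {b}" for b in state["blockers"]] or ["- No blockers this week"]
    state["memo"] = "Weekly status\nBlockers:\n" + "\n".join(lines)
    return state


def run_workflow(steps: list[Step], state: dict) -> tuple[dict, list[str]]:
    """Run AI-owned steps in order and collect everything a person must handle."""
    human_queue: list[str] = []
    for step in steps:
        if step.owner == "human":
            human_queue.append(f"Decide: {step.name}")
            continue
        try:
            state = step.action(state)
        except Exception as exc:
            # Fallback: stop the run and hand the failed step to a person.
            human_queue.append(f"Fallback: {step.name} failed ({exc!r})")
            break
        if step.review_after:
            human_queue.append(f"Review: {step.name}")
    return state, human_queue


steps = [
    Step("Collect weekly updates", "ai", collect_updates),
    Step("Summarize blockers", "ai", summarize_blockers),
    Step("Draft stakeholder memo", "ai", draft_memo, review_after=True),
    Step("Propose next actions", "human"),
]
state, queue = run_workflow(
    steps, {"raw_updates": ["CRM: on track", "Billing: blocked on vendor API", ""]}
)
print(state["memo"])
print(queue)
```

The design choice worth copying is the explicit human queue: review points and fallbacks are part of the workflow definition, not something someone remembers to do.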

Skill 3: Judgment and verification (the anti-hallucination moat)

As AI takes more execution tasks, human value shifts toward decision quality.

Both GDPval and Anthropic’s work point to the same operational truth: model output can be very strong, but real-world reliability still depends on oversight, especially for ambiguous, high-stakes, or multi-step tasks.

So the third core skill is structured judgment:

- spotting subtle errors quickly

- checking assumptions and missing context

- deciding when to trust, revise, or discard output

Example

If AI drafts a market analysis, a weak reviewer checks grammar. A strong reviewer checks:

- Did we confuse correlation with causation?

- Are we reusing stale assumptions from last quarter?

- Are cited numbers internally consistent?

- Are recommended actions constrained by actual budget and team capacity?

In an agentic workplace, “can you verify high-speed output” becomes a premium skill.
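
Of the four reviewer questions above, only “Are cited numbers internally consistent?” is mechanical enough to partly automate. A hypothetical Python sketch: `check_total` and `check_shares` are made-up helpers, the tolerances are arbitrary defaults, and the figures are invented to show a draft that fails both checks. The other three questions still need a human reviewer.

```python
import math


def check_total(label: str, total: float, parts: dict[str, float],
                rel_tol: float = 0.01) -> list[str]:
    """Flag a stated total that does not match the sum of its parts (1% tolerance by default)."""
    parts_sum = sum(parts.values())
    if math.isclose(parts_sum, total, rel_tol=rel_tol):
        return []
    return [f"{label}: parts sum to {parts_sum:g}, but the stated total is {total:g}"]


def check_shares(label: str, shares: dict[str, float],
                 tol_points: float = 0.5) -> list[str]:
    """Flag a percentage split that does not add up to roughly 100%."""
    share_sum = sum(shares.values())
    if abs(share_sum - 100) <= tol_points:
        return []
    return [f"{label}: shares add up to {share_sum:g}%, not 100%"]


# Invented figures from an AI-drafted market analysis.
issues = (
    check_total("Q2 pipeline ($k)", 1200, {"APAC": 450, "EMEA": 380, "NA": 300})
    + check_shares("Channel mix", {"Search": 40, "Events": 35, "Partners": 30})
)
for issue in issues:
    print(issue)
```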

Who gets wage upside first, and who gets squeezed first

Likely upside group

- People who can turn fuzzy goals into structured AI workflows

- People who can manage quality at scale (not just produce text)

- People who combine domain context + orchestration + judgment

Likely squeeze group

- Roles defined mainly by repeatable first-draft production

- Teams with weak context hygiene (messy docs, unclear ownership)

- Professionals who treat AI as a toy instead of core infrastructure

The pattern is not “technical vs non-technical.” It’s “system thinkers vs task repeaters.”

A practical 30/60/90-day plan

If you’re feeling pressure, don’t debate AI endlessly. Build capability in cycles.

Days 1–30: Audit and context discipline

- Track your weekly work in 30-minute blocks

- Mark tasks as: repetitive / judgment-heavy / cross-functional

- For repetitive tasks, start using structured context templates (goal, constraints, source docs, quality bar)

Deliverable: one reusable context template for your most common task.
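
The Days 1–30 audit boils down to a simple tally. A minimal Python sketch, assuming each logged entry is one 30-minute block tagged with one of the three categories above; `summarize_week` and the sample week are hypothetical. Treating the most frequent repetitive task as the first template candidate is one reasonable heuristic, not the only one.

```python
from collections import Counter
from typing import Optional

BLOCK_HOURS = 0.5  # each log entry is one 30-minute block
CATEGORIES = ("cross-functional", "judgment-heavy", "repetitive")


def summarize_week(log: list[tuple[str, str]]) -> tuple[dict[str, float], Optional[str]]:
    """Total hours per category and pick the most frequent repetitive task."""
    hours = {category: 0.0 for category in CATEGORIES}
    for task, category in log:
        if category not in hours:
            raise ValueError(f"Unknown category {category!r} for {task!r}")
        hours[category] += BLOCK_HOURS
    repetitive = Counter(task for task, category in log if category == "repetitive")
    # The most frequent repetitive task is the first candidate for a context template.
    top_task = repetitive.most_common(1)[0][0] if repetitive else None
    return hours, top_task


week = [
    ("Status report", "repetitive"),
    ("Status report", "repetitive"),
    ("Vendor negotiation", "judgment-heavy"),
    ("Sales data pull", "repetitive"),
    ("Launch sync with product", "cross-functional"),
    ("Status report", "repetitive"),
]
hours, template_candidate = summarize_week(week)
print(hours)
print(template_candidate)
```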

Days 31–60: Build one real workflow

- Pick one recurring process with obvious friction

- Break it into steps AI can handle vs steps humans must own

- Add explicit review checkpoints for risk-sensitive outputs

Deliverable: one working AI-assisted flow that saves at least 2 hours/week.

Days 61–90: Convert skill into visible business value

- Measure before/after cycle time and error rate

- Document what changed and why

- Present outcomes in business terms (speed, quality, cost, risk)

Deliverable: a short internal case study showing concrete ROI.
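
The before/after comparison is simple arithmetic, but writing it down keeps the case study honest. A minimal Python sketch: `summarize_pilot` is a hypothetical helper, cycle time is measured in hours per run, error rate as errors per run, and the sample numbers are invented for illustration.

```python
from statistics import mean


def percent_change(before: float, after: float) -> float:
    """Relative change from before to after, in percent (negative means a reduction)."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before * 100


def summarize_pilot(before_hours: list[float], after_hours: list[float],
                    errors_before: int, errors_after: int) -> dict[str, float]:
    """Compare cycle time and error rate across runs before and after the workflow."""
    cycle_before, cycle_after = mean(before_hours), mean(after_hours)
    return {
        "cycle_time_before_h": cycle_before,
        "cycle_time_after_h": cycle_after,
        "cycle_time_change_pct": percent_change(cycle_before, cycle_after),
        "hours_saved_per_run": cycle_before - cycle_after,
        "error_rate_before": errors_before / len(before_hours),
        "error_rate_after": errors_after / len(after_hours),
    }


# Invented data: four weekly runs before and four after introducing the AI-assisted flow.
result = summarize_pilot([4.0, 3.5, 4.5, 4.0], [1.5, 2.0, 1.5, 1.0],
                         errors_before=2, errors_after=1)
for metric, value in result.items():
    print(f"{metric}: {value:g}")
```

If the process runs once a week, 2.5 hours saved per run clears the 2-hours-per-week target from Days 31–60. Error rate is reported as a plain per-run rate rather than a percent change, because a baseline of zero errors would make a percent change undefined.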

If you can’t show measurable value, you’re still experimenting. If you can, you’re already operating at the next job level.

Final thought

AI didn’t erase human value. It changed where human value sits.

Execution is getting cheaper.

Clarity, orchestration, and judgment are getting more expensive.

If your current role is mostly execution, don’t panic, but don’t stay still either.

Rebuild your edge around those three skills, and you won’t just survive this shift. You’ll be one of the people defining how work gets done next.

Question for you: In your current job, which part is most exposed right now — execution, context design, or final judgment? I’d love to hear your real case.